A combination check is a data validation check that code#

This is the code used in the If Metadata’).output.structure, The example code only shows two columns, and this can be extended as required.

A combination check is a data validation check that how to#

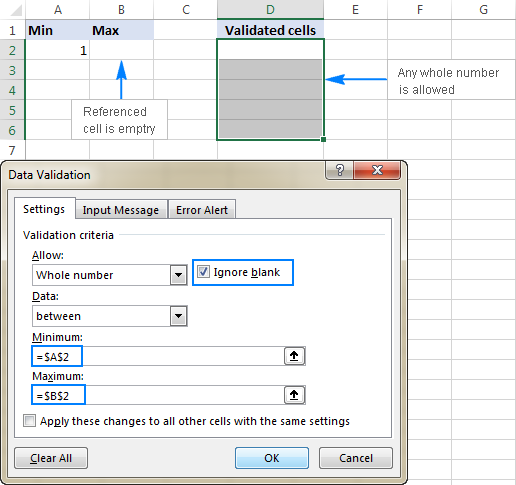

Method 2: Validate using codeĪn alternate solution that I discovered when I was researching how to do this, was using an array to store the controlled schema as text and using the ADF json and createArray functions to create the correct structure.

Depending upon to result of the IF condition, use the ADF Copy Data activity to copy the file to the RAW zone (accepting the file) or to the Quarantine zone (rejecting the file).įor a series of files, you would need to use the ADF ForEach activity to loop through the list of files.The equals formula compares two objects, not just the content that the object contains. This is the logic to use in the If Metadata1’).output.structure,activity(‘Get Metadata2’).output.structure) Use the ADF If Condition to compare the metadata structures.In my example, I have used a file already in storage as the controlled schema to compare to the incoming files. Compare to a known schema that we are expecting.The files will need to be stored in an Azure storage account. Retrieve the metadata from the newly ingested files using the ADF Get Metadata We will use the Structure attribute which will return a list of column names and column types in JSON format.So, this method simply validates new files by comparing to a known file. File 1 would be a new file that has been stored and File 2 would represent our controlled schema file. My initial approach was to compare 2 files directly. I will discuss the method I typically use and two other variations that I tested that may also interest you. Now that we have established that it is a great idea to validate the files, let’s look at how you can achieve this using ADF. Why would we need to validate files? There are several reasons. The specific part of this framework that I will discuss here, which will make a data lake more robust, are 3 variations of a technique to validate the structure of the files using Azure Data Factory (ADF). It also doubles as a best practice design for the consultants to get the build done efficiently, whilst also avoiding traps that we have encountered in the past. Our framework is an important starting point for discussions with our clients. 
How To Validate Data Lake Files Using Azure Data Factoryīy Garry Farrell, Managing Consultant- Altis Sydneyĭata lakes are very popular, and I have been helping to extend the framework we use with our clients.
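As a rough illustration outside ADF, the structure check described in this article can be sketched in Python. This is not ADF code and not the author's pipeline. The column names, file names and helper functions are hypothetical, and the sketch assumes the Structure attribute yields a list of objects with `name` and `type` keys. It builds the controlled schema from text (similar in spirit to combining json and createArray), compares it with each incoming structure, and picks a target zone.

```python
import json

# Hypothetical controlled schema stored as text, one JSON object per column.
# Only two columns are shown; extend the list as required.
CONTROLLED_SCHEMA_TEXT = [
    '{"name": "CustomerId", "type": "Int64"}',
    '{"name": "CustomerName", "type": "String"}',
]


def build_schema(column_texts):
    """Parse each column definition into a dict and collect them in a list."""
    return [json.loads(text) for text in column_texts]


def structures_match(incoming, expected):
    """Return True when column names, types and column order are identical."""
    return incoming == expected


def target_zone(incoming, expected):
    """Accept the file into 'raw' or reject it into 'quarantine'."""
    return "raw" if structures_match(incoming, expected) else "quarantine"


def route_files(structures_by_file, expected):
    """Loop over a series of files and decide the zone for each one."""
    return {
        file_name: target_zone(structure, expected)
        for file_name, structure in structures_by_file.items()
    }


if __name__ == "__main__":
    expected_schema = build_schema(CONTROLLED_SCHEMA_TEXT)
    # Hypothetical structures, standing in for Get Metadata output.
    incoming_structures = {
        "customers_ok.csv": [
            {"name": "CustomerId", "type": "Int64"},
            {"name": "CustomerName", "type": "String"},
        ],
        "customers_bad.csv": [
            {"name": "CustomerId", "type": "String"},
            {"name": "CustomerName", "type": "String"},
        ],
    }
    for file_name, zone in route_files(incoming_structures, expected_schema).items():
        print(file_name, "->", zone)
```

In this sketch a file is accepted only when every column name and type matches in the same order. Whether column order should count as a mismatch is a design choice to confirm against your own pipeline.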